Natural language processing (NLP) is one of the most discussed topics in AI. It is a key tool for building generative AI applications that can write essays and power chatbots that interact personally with human users. As the popularity of ChatGPT soared, attention to the best NLP models gained momentum. NLP focuses on building machines that can interpret and manipulate natural human language.

It evolved from the field of computational linguistics and uses computer science to understand the principles of language. NLP is now transforming many parts of everyday life, and the commercial applications of NLP models have drawn further attention to them. Let us learn more about the most renowned NLP models and how they differ from each other.

What is the Significance of NLP Models?

Why learn about NLP models? They have become the most visible part of AI because of their wide range of use cases. Common tasks for NLP models include sentiment analysis, machine translation, spam detection, named entity recognition, and grammatical error correction. They also help with topic modeling, text generation, information retrieval, question answering, and summarization.

All of the top NLP models work by identifying relationships between the elements of language, such as the letters, words, and sentences in a text dataset. They use different techniques for three distinct stages: data preprocessing, feature extraction, and modeling.

The data preprocessing stage improves model performance by turning words and characters into a format the model can work with. Data preprocessing is also central to data-centric AI. Notable techniques include sentence segmentation, tokenization, stemming and lemmatization, and stop-word removal.
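
The preprocessing techniques named above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a production pipeline: the stop-word list, the regular expressions, and the suffix-stripping `naive_stem` helper are all assumptions made for the example, and real projects would normally rely on a dedicated NLP library.

```python
import re

# Tiny illustrative stop-word list (an assumption; real lists are much longer).
STOP_WORDS = {"a", "an", "the", "is", "are", "was", "were", "of", "to", "and", "in", "it"}


def split_sentences(text):
    """Sentence segmentation: split after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(sentence):
    """Tokenization: lowercase, then keep runs of letters, digits and apostrophes."""
    return re.findall(r"[a-z0-9']+", sentence.lower())


def remove_stop_words(tokens):
    """Stop-word removal: drop very common words that carry little meaning."""
    return [t for t in tokens if t not in STOP_WORDS]


def naive_stem(token):
    """Crude suffix stripping; a hypothetical stand-in for a real stemmer."""
    for suffix in ("ing", "ed", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Run all stages and return one list of stems per sentence."""
    return [
        [naive_stem(t) for t in remove_stop_words(tokenize(sentence))]
        for sentence in split_sentences(text)
    ]
```

Calling `preprocess("The models are learning. It translated the documents!")` yields one list of stems per sentence. The crude stemmer produces forms such as `translat`, which is typical of stemming and one reason lemmatization is sometimes preferred.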

The feature extraction stage produces features, that is, numbers that describe the relationship between documents and the text they contain. Conventional techniques for feature extraction include bag-of-words, generic feature engineering, and TF-IDF. Newer techniques include GloVe, Word2Vec, and learning the important features during the training of a neural network.
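
To make bag-of-words and TF-IDF concrete, here is a small sketch that assumes documents have already been tokenized into lists of strings. It uses one common TF-IDF variant (term frequency normalized by document length, unsmoothed natural-log IDF); other libraries use slightly different formulas, so treat the exact weights as illustrative.

```python
import math
from collections import Counter


def bag_of_words(tokens):
    """Bag-of-words: count how often each term occurs, ignoring word order."""
    return Counter(tokens)


def tf_idf(docs):
    """docs is a list of token lists; returns one {term: weight} dict per document."""
    n_docs = len(docs)

    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter()
    for tokens in docs:
        doc_freq.update(set(tokens))

    weights = []
    for tokens in docs:
        counts = bag_of_words(tokens)
        total = len(tokens)
        weights.append({
            # Term frequency times inverse document frequency.
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights
```

A term that appears in every document gets a weight of zero under this variant, which is the point of the IDF factor: words shared by all documents do not help tell them apart.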

The final stage, modeling, is where the NLP model itself is built. Once you have preprocessed data, you can feed it into an NLP architecture that models the data to accomplish the desired task. For example, numerical features can serve as inputs to different models. Deep neural networks and language models are the most notable examples of modeling.

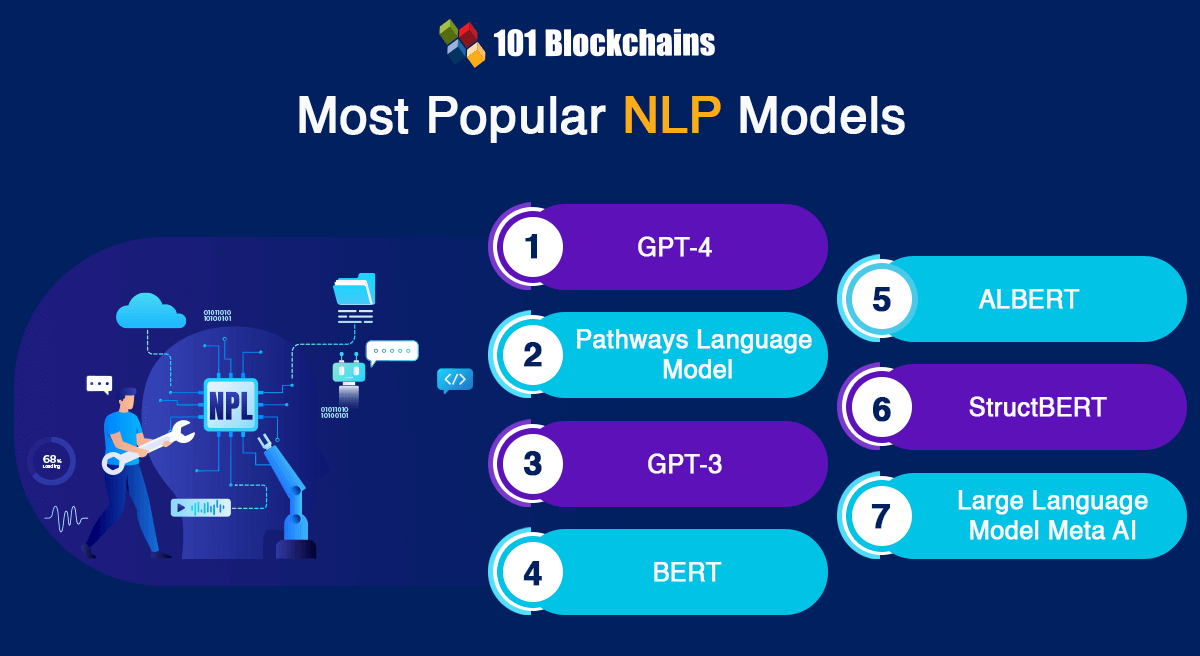

Most Popular Natural Language Processing Models

The arrival of pre-trained language models and transfer learning in NLP set new benchmarks for language understanding and generation. Recent research advances include the application of transformers to different types of downstream NLP tasks. Still, a question such as ‘Which NLP model gives the best accuracy?’ will lead you toward a handful of popular names.

You may come across conflicting views in the NLP community about the value of massive pre-trained language models. Nonetheless, the latest advances in NLP have been driven by large improvements in computing capacity, together with new techniques for optimizing models to achieve high performance. Here is an outline of the most renowned and commonly used NLP models to watch in the AI landscape.

Generative Pre-trained Transformer 4

Generative Pre-trained Transformer 4, or GPT-4, is the most popular NLP model on the market right now. It tops this list because of the popularity of ChatGPT: if you have used ChatGPT Plus, you have used GPT-4. It is a large language model created by OpenAI, and it is multimodal, so it can take images as well as text as input. That makes GPT-4 considerably more versatile than the earlier GPT models, which could only take text inputs.

During development, GPT-4 was trained to predict the next token of its input. It was then fine-tuned using feedback from humans and AI systems, with the aim of keeping its behavior in line with human values and the specified policies for AI use.
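
The idea of training a model to predict what comes next can be illustrated with a toy example. GPT-4 itself is a large neural network; the count-based bigram table below is a deliberately simple stand-in, and the function names `train_bigram` and `predict_next` are hypothetical names chosen for this sketch.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for every token, which tokens follow it in the training text."""
    following = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    candidates = model.get(token)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


corpus = "the cat sat on the cat mat".split()
model = train_bigram(corpus)
```

With this corpus, `predict_next(model, "the")` returns `"cat"`, because "cat" follows "the" more often than any other word. A large language model replaces the count table with a neural network that conditions on far more context than a single previous word.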

GPT-4 has played a crucial role in enhancing the capabilities of ChatGPT. Nonetheless, it still faces some of the challenges that were present in earlier models. Its size is often cited as a key advantage, but OpenAI has not published a parameter count for GPT-4. The figure of 175 billion parameters belongs to GPT-3, the predecessor of GPT-3.5, the model behind the original ChatGPT.

Pathways Language Model

The next addition among the best NLP models is the Pathways Language Model, or PaLM. It was created by the Google Research team and represents a major step forward in language technology, with 540 billion parameters.

PaLM was trained with Pathways, a system for efficient computing that coordinates training across many processors. One of the most important highlights of PaLM is the scalability of its training process, which ran on 6144 TPU v4 chips, making it one of the largest TPU-based training runs.

PaLM is one of the popular NLP models with the potential to reshape the NLP landscape. Its training data mixed sources in English and many other languages, including books, conversations, code from GitHub, web documents, and Wikipedia content.

With such an extensive training dataset, PaLM performs well on language tasks such as sentence completion and question answering. It also excels at reasoning and can work through complex math problems while giving clear explanations. In coding, PaLM performs on par with specialized models while needing less code to learn from.

Generative Pre-trained Transformer 3

GPT-3 is a transformer-based NLP model that can answer questions, translate, and compose poetry. It is also one of the top NLP models for tasks that involve reasoning, such as unscrambling words, and it can write news articles and generate code. GPT-3 does this by modeling the statistical dependencies between words.

GPT-3 has 175 billion parameters and was trained on text drawn from about 45 TB of data sourced from the internet, which makes it one of the largest pre-trained NLP models. Another interesting feature of GPT-3 is that it does not need fine-tuning to perform downstream tasks. Instead, developers use its ‘text in, text out’ API to reprogram the model by supplying relevant instructions.
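
In practice, "reprogramming" a model through a text-in, text-out interface means describing the task and a few worked examples inside the prompt itself. The sketch below only assembles such a prompt string; it does not call any API, and the `Input:`/`Output:` layout and the function name `build_few_shot_prompt` are assumptions made for illustration.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a prompt with an instruction, worked examples, and a new query.

    examples is a list of (input_text, output_text) pairs.
    """
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The prompt ends with an open "Output:" for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("cheese", "fromage"), ("house", "maison")],
    "bread",
)
```

The resulting text would be sent to the model as is, and the model's continuation after the final `Output:` is read as the answer. Changing the instruction and examples changes the task without touching the model's weights.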

Bidirectional Encoder Representations from Transformers

Bidirectional Encoder Representations from Transformers, or BERT, is another promising entry in this list of NLP models. Google created BERT as a technique for NLP pre-training. It is built on the transformer, a neural network architecture that uses the self-attention mechanism to understand natural language.
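
Self-attention lets every token build its representation as a weighted mix of all tokens in the sequence. The following is a minimal sketch of scaled dot-product self-attention in plain Python. To stay short it assumes the queries, keys, and values are the input vectors themselves; a real transformer first applies learned projection matrices and uses several attention heads.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """vectors: list of equal-length float lists, one per token."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Weighted average of all token vectors.
        outputs.append([
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ])
    return outputs
```

Each output vector has the same length as the inputs, and tokens that are more similar to the current token contribute more to its output.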

The transformer was originally created to solve sequence transduction problems such as neural machine translation, so it works well for tasks that transform an input sequence into an output sequence. Speech recognition and text-to-speech conversion are other examples of sequence transduction.

You can find a reasonable answer to “Which NLP model gives the best accuracy?” by looking at the details of transformers. The transformer uses two mechanisms: an encoder and a decoder. The encoder reads the text input, while the decoder produces predictions for the task. Because the goal of BERT is to produce an effective language model, it uses only the encoder.

BERT has proved its effectiveness on 11 NLP tasks. Its training data includes 2,500 million words from Wikipedia and 800 million words from the BookCorpus dataset. Google Search is one of the best-known applications of BERT, which it uses to interpret queries more accurately. Other Google applications, including Google Docs, also use BERT for text prediction.

A Lite BERT

Pre-trained language models are one of the prominent highlights of NLP, and larger pre-trained models generally improve performance on downstream tasks. However, increasing the model size runs into problems such as GPU/TPU memory limits and longer training times. Google therefore introduced a lighter and more optimized version of BERT.

The new model, ALBERT, uses two distinct techniques for parameter reduction: factorized embedding parameterization and cross-layer parameter sharing. Factorized embedding parameterization decouples the size of the hidden layers from the size of the vocabulary embedding.
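
A quick calculation shows why separating the embedding size from the hidden size saves parameters. Instead of one vocabulary-by-hidden matrix, the factorized form uses a small vocabulary-by-embedding matrix followed by an embedding-by-hidden projection. The sizes below (a 30,000-word vocabulary, hidden size 768, embedding size 128) are illustrative values chosen for this sketch.

```python
def embedding_params(vocab_size, hidden_size):
    """One embedding matrix tied to the hidden size: V x H weights."""
    return vocab_size * hidden_size


def factorized_embedding_params(vocab_size, embedding_size, hidden_size):
    """Small V x E embedding matrix plus an E x H projection."""
    return vocab_size * embedding_size + embedding_size * hidden_size


VOCAB, HIDDEN, EMBED = 30_000, 768, 128  # illustrative sizes

direct = embedding_params(VOCAB, HIDDEN)                        # 23,040,000
factorized = factorized_embedding_params(VOCAB, EMBED, HIDDEN)  # 3,938,304
```

With these sizes the embedding parameters shrink from about 23 million to under 4 million, and the saving grows as the hidden size increases because the vocabulary no longer multiplies it directly.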

Cross-layer parameter sharing, in turn, keeps the number of parameters from growing with the depth of the network. Together, the two techniques reduce memory consumption and increase the training speed of the model. ALBERT also adds a self-supervised loss for sentence-order prediction, which targets inter-sentence coherence, a known weakness of BERT.
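
The effect of cross-layer parameter sharing on model size can be estimated with simple arithmetic. The sketch below counts the weights and biases of a standard transformer encoder layer (four attention projections, a two-layer feed-forward block, two layer norms) and compares a stack with separate layers to one where every layer reuses the same parameters. The layer sizes are illustrative assumptions.

```python
def encoder_layer_params(hidden_size, intermediate_size):
    """Approximate parameter count of one transformer encoder layer."""
    # Query, key, value and output projections, each H x H plus a bias.
    attention = 4 * (hidden_size * hidden_size + hidden_size)
    # Feed-forward block: H -> I and I -> H, each with a bias.
    feed_forward = (
        hidden_size * intermediate_size + intermediate_size
        + intermediate_size * hidden_size + hidden_size
    )
    # Two layer norms, each with a scale and a shift vector.
    layer_norms = 2 * (2 * hidden_size)
    return attention + feed_forward + layer_norms


def stack_params(num_layers, hidden_size, intermediate_size, share_across_layers):
    """Total encoder parameters with or without cross-layer sharing."""
    per_layer = encoder_layer_params(hidden_size, intermediate_size)
    return per_layer if share_across_layers else num_layers * per_layer


unshared = stack_params(12, 768, 3072, share_across_layers=False)  # 85,054,464
shared = stack_params(12, 768, 3072, share_across_layers=True)     # 7,087,872
```

With sharing, adding more layers increases computation but not the number of stored parameters, which is why the parameter count stops growing with depth.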

StructBERT

Attention toward BERT has been gaining momentum because of its effectiveness in natural language understanding, or NLU. It has achieved impressive accuracy on different NLP tasks, such as semantic textual similarity, question answering, and sentiment classification. While BERT is one of the best NLP models, it still has room for improvement. StructBERT extends BERT by incorporating language structures into the pre-training stage.

StructBERT relies on structural pre-training to deliver strong empirical results on different downstream tasks. For example, it improves the score on the GLUE benchmark compared with other published models, and it also improves accuracy on question-answering tasks. Like many other pre-trained NLP models, StructBERT can support businesses with different NLP tasks, such as document summarization, question answering, and sentiment analysis.

Large Language Model Meta AI

Large Language Model Meta AI, the large language model from Meta (formerly Facebook), arrived in the NLP ecosystem in 2023. Better known as Llama, it is an advanced language model with almost 70 billion parameters, and it could soon become one of the most popular NLP models. Initially, only approved developers and researchers could access Llama. It has since become an open source NLP model, which lets a broader community use and explore its capabilities.

One important detail about Llama is its adaptability. It comes in several sizes, including smaller versions that need less computing power. That flexibility makes Llama more accessible for practical use cases and testing, and it opens the door to new experiments.

The most interesting thing about Llama is that it reached the public unintentionally, without any planned release event. Its sudden arrival, with the doors open for experimentation, led to the creation of new, related models such as Orca. These Llama-based models build on its capabilities. Orca, for example, draws on the comprehensive linguistic capabilities of Llama.

Conclusion

This outline of the top NLP models covers some of the most promising entries on the market right now. The interesting thing about NLP is that you can find many models tailored to particular applications, each with different advantages. The growing use of NLP in business and in everyday life has created curiosity about NLP models.

Candidates preparing for jobs in AI need to learn about new and existing NLP models and how they work. NLP is an integral part of AI, and the steadily growing adoption of AI improves the prospects for NLP models. Learn more about NLP models and their components right now.